Archived seminars in Mathematics

Seminars 101 to 150

Scotland Leman

Virginia Tech, USA

Date: Tuesday 8 November 2016

In this talk I will primarily discuss the Multiset Sampler (MSS): a general ensemble-based Markov Chain Monte Carlo (MCMC) method for sampling from complicated stochastic models. Afterwards, I will briefly introduce the audience to my research in interactive visual analytics.

Proposal distributions for complex structures are essential for virtually all MCMC sampling methods. However, such proposal distributions are difficult to construct so that they match the true target distribution, which in turn hampers the efficiency of the overall MCMC scheme. The MSS entails sampling from an augmented distribution that has more desirable mixing properties than the original target model, while utilizing simple independent proposal distributions that are easily tuned. I will discuss applications of the MSS for sampling from tree-based models (e.g. Bayesian CART; phylogenetic models), and for general model selection, model averaging and predictive sampling.

In the final 10 minutes of the presentation I will discuss my research interests in interactive visual analytics and the Visual To Parametric Interaction (V2PI) paradigm. I'll discuss the general concepts in V2PI with an application of Multidimensional Scaling, its technical merits, and the integration of such concepts into core statistics undergraduate and graduate programs.

Ivor Cribben

University of Alberta

Date: Wednesday 19 October 2016

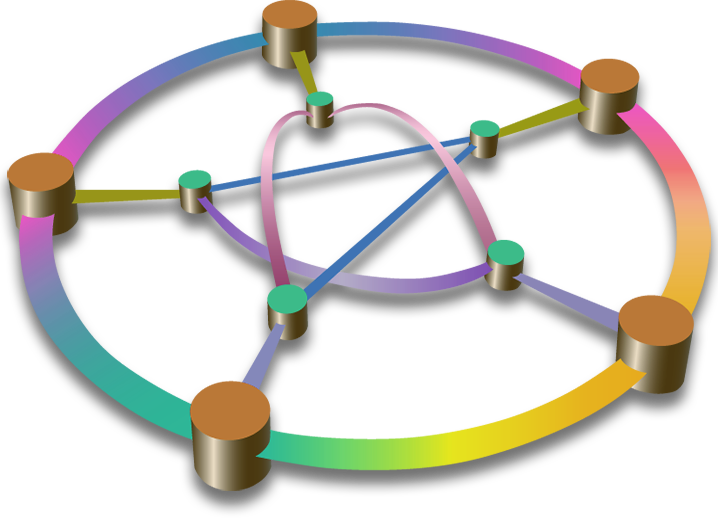

Spectral clustering is a computationally feasible and model-free method widely used in the identification of communities in networks. We introduce a data-driven method, namely Network Change Points Detection (NCPD), which detects change points in the network structure of a multivariate time series, with each component of the time series represented by a node in the network. Spectral clustering allows us to consider high dimensional time series where the number of time series is greater than the number of time points. NCPD allows for estimation of both the time of change in the network structure and the graph between each pair of change points, without prior knowledge of the number or location of the change points. Permutation and bootstrapping methods are used to perform inference on the change points. NCPD is applied to various simulated high dimensional data sets as well as to a resting state functional magnetic resonance imaging (fMRI) data set. The new methodology also allows us to identify common functional states across subjects and groups. Extensions of the method are also discussed. Finally, the method promises to offer a deep insight into the large-scale characterisations and dynamics of the brain.
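As a minimal sketch of the basic spectral clustering step only (not of NCPD itself, whose details are not given in the abstract), the following splits a small network into two communities using the sign of the second eigenvector of the graph Laplacian. The function name `spectral_bipartition` and the toy graph of two triangles joined by one edge are assumptions made for illustration.

```python
import numpy as np


def spectral_bipartition(adjacency):
    """Split the nodes of a connected graph into two communities.

    Uses the sign of the Fiedler vector, i.e. the eigenvector belonging to
    the second-smallest eigenvalue of the unnormalised graph Laplacian.
    """
    A = np.asarray(adjacency, dtype=float)
    laplacian = np.diag(A.sum(axis=1)) - A
    # eigh returns eigenvalues in ascending order for symmetric matrices.
    _, eigvecs = np.linalg.eigh(laplacian)
    fiedler = eigvecs[:, 1]
    return (fiedler > 0).astype(int)


# Hypothetical toy network: triangle {0, 1, 2} and triangle {3, 4, 5},
# connected by the single edge 2-3.
edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]
A = np.zeros((6, 6))
for i, j in edges:
    A[i, j] = A[j, i] = 1.0

labels = spectral_bipartition(A)
print(labels)  # nodes in the same triangle share a label
```

Which community receives label 0 or 1 depends on the arbitrary sign of the eigenvector, so only the grouping is meaningful.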

Richard Norton

Department of Mathematics and Statistics

Date: Tuesday 18 October 2016

I analyse the efficiency of Metropolis-Hastings algorithms with stochastic autoregressive proposals. These include many existing methods, such as the Metropolis-Adjusted Langevin Algorithm (MALA), the preconditioned Crank-Nicolson algorithm (pCN) and the Hybrid Monte Carlo algorithm (HMC). Previously, each of these algorithms required its own separate analysis. Using my analysis I can extend what is known about these algorithms as well as analyse new algorithms.
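To make the idea of an autoregressive proposal concrete, here is a minimal sketch of the standard pCN algorithm in one dimension. It is an illustration, not the analysis presented in the talk; the function name `pcn_sample`, the step parameter `beta` and the example target are assumptions.

```python
import math
import random


def pcn_sample(phi, n_steps, beta=0.5, x0=0.0, seed=0):
    """Sample a 1D density proportional to exp(-phi(x)) * N(x; 0, 1) with pCN."""
    rng = random.Random(seed)
    x = x0
    samples = []
    for _ in range(n_steps):
        # AR(1) proposal: contract the current state and add fresh Gaussian noise.
        y = math.sqrt(1.0 - beta ** 2) * x + beta * rng.gauss(0.0, 1.0)
        # This proposal is reversible with respect to N(0, 1), so the
        # Metropolis-Hastings ratio reduces to exp(phi(x) - phi(y)).
        accept_prob = math.exp(min(0.0, phi(x) - phi(y)))
        if rng.random() < accept_prob:
            x = y
        samples.append(x)
    return samples


# Illustrative target: phi(x) = (x - 1)^2 / 2, so the density is N(0.5, 0.5).
draws = pcn_sample(lambda x: 0.5 * (x - 1.0) ** 2, n_steps=20000)
kept = draws[2000:]  # discard burn-in
print(sum(kept) / len(kept))  # sample mean; the exact mean is 0.5
```

The proposal is a first-order autoregression in the current state, which is the structure shared by the methods listed above.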

John Tipton

Colorado State University

Date: Tuesday 18 October 2016

Many scientific disciplines have strong traditions of developing models to approximate nature. Traditionally, statistical models have not included scientific models and have instead focused on regression methods that exploit correlation structures in data. The development of Bayesian methods has generated many examples of forward models that bridge the gap between scientific and statistical disciplines. The ability to fit forward models using Bayesian methods has generated interest in paleoclimate reconstructions, but there are many challenges in model construction and estimation that remain.

I will present two statistical reconstructions of climate variables using paleoclimate proxy data. The first example is a joint reconstruction of temperature and precipitation from tree rings using a mechanistic process model. The second reconstruction uses microbial species assemblage data to predict peat bog water table depth. I validate predictive skill using proper scoring rules in simulation experiments, providing justification for the empirical reconstruction. Results show forward models that leverage scientific knowledge can improve paleoclimate reconstruction skill and increase understanding of the latent natural processes.

Benjamin Fitzpatrick

Queensland University of Technology

Date: Monday 17 October 2016

When making inferences concerning the environment, ground truthed data will frequently be available as point referenced (geostatistical) observations accompanied by a rich ensemble of potentially relevant remotely sensed and in-situ observations.

Modern soil mapping is one such example characterised by the need to interpolate geostatistical observations from soil cores and the availability of data on large numbers of environmental characteristics for consideration as covariates to aid this interpolation.

In this talk I will outline my application of Least Absolute Shrinkage and Selection Operator (LASSO) regularized multiple linear regression (MLR) to build models for predicting full cover maps of soil carbon when the number of potential covariates greatly exceeds the number of observations available (the p > n or ultrahigh dimensional scenario). I will outline how I have applied LASSO regularized MLR models to data from multiple (geographic) sites and discuss investigations into treatments of site membership in models and the geographic transferability of models developed. I will also present novel visualisations of the results of ultrahigh dimensional variable selection and briefly outline some related work in ground cover classification from remotely sensed imagery.
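For readers unfamiliar with the p > n setting, the following is a minimal sketch of LASSO regression fitted by cyclic coordinate descent on simulated data. It is not the speaker's implementation; the function names, the penalty value `lam` and the synthetic data are all assumptions chosen for illustration.

```python
import numpy as np


def soft_threshold(z, t):
    """Shrink z towards zero by t, returning exactly zero when |z| <= t."""
    return np.sign(z) * max(abs(z) - t, 0.0)


def lasso_coordinate_descent(X, y, lam, n_sweeps=200):
    """Minimise (1 / 2n) * ||y - X b||^2 + lam * ||b||_1 over b."""
    n, p = X.shape
    beta = np.zeros(p)
    residual = y.astype(float).copy()  # equals y - X @ beta while beta is zero
    col_sq = (X ** 2).sum(axis=0) / n
    for _ in range(n_sweeps):
        for j in range(p):
            # Correlation of column j with the residual that excludes feature j.
            rho = X[:, j] @ residual / n + col_sq[j] * beta[j]
            new_bj = soft_threshold(rho, lam) / col_sq[j]
            if new_bj != beta[j]:
                residual -= X[:, j] * (new_bj - beta[j])
                beta[j] = new_bj
    return beta


# Simulated example with far more covariates (p) than observations (n).
rng = np.random.default_rng(0)
n, p = 50, 200
X = rng.standard_normal((n, p))
true_beta = np.zeros(p)
true_beta[[3, 50, 120]] = [4.0, -3.0, 5.0]  # only three covariates matter
y = X @ true_beta + 0.1 * rng.standard_normal(n)

beta_hat = lasso_coordinate_descent(X, y, lam=0.2)
largest = np.sort(np.argsort(np.abs(beta_hat))[-3:])
print(largest)  # indices of the three largest coefficients in magnitude
```

The L1 penalty sets most coefficients exactly to zero, which is what makes variable selection possible when ordinary least squares is not identifiable.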

Key references:

Fitzpatrick, B. R., Lamb, D. W., & Mengersen, K. (2016). Ultrahigh Dimensional Variable Selection for Interpolation of Point Referenced Spatial Data: A Digital Soil Mapping Case Study. PLoS ONE, 11(9): e0162489.

Fitzpatrick, B. R., Lamb, D. W., & Mengersen, K. (2016). Assessing Site Effects and Geographic Transferability when Interpolating Point Referenced Spatial Data: A Digital Soil Mapping Case Study. https://arxiv.org/abs/1608.00086

Chris Linsell

College of Education

Date: Tuesday 6 September 2016

Miguel Moyers-Gonzalez

University of Canterbury

Date: Tuesday 23 August 2016

Melissa Tacy

Australian National University

Date: Tuesday 23 August 2016

Semiclassical analysis arose as a set of techniques for studying the high energy (or semiclassical) limit of quantum mechanics. These techniques have the advantage that intuition derived from the quantum-classical correspondence principle can guide our technical development. In this talk I will introduce some of the key techniques and discuss results such as the $L^{p}$ growth for products of Laplacian eigenfunctions and high energy phase space concentration estimates.

Fabien Montiel

Department of Mathematics and Statistics

Date: Monday 22 August 2016

In a one-dimensional homogeneous medium, linear wave scattering by an array of inclusions, e.g. beads on a string, can be reduced to a multiple reflection/transmission problem, in which the reflected and transmitted waves by an inclusion become incident waves on the adjacent inclusions. Under time-harmonic conditions, fast iterative methods can be used to obtain the solution of this class of scattering problems. In a two-dimensional medium, however, such methods cannot be directly extended as there is no natural way of uniquely ordering a finite number of arbitrarily positioned inclusions, e.g. circles, in the plane. A semi-analytical method was devised to solve deterministically the scattering of time-harmonic waves by a large finite array of inclusions in two dimensions. The method consists of clustering the inclusions into adjacent parallel slabs. The solution is obtained by combining plane wave expansions of the scattered field by each slab and a fast iterative technique for slab-slab interactions similar to the one-dimensional method mentioned above.

In this talk, I will describe this so-called slab-clustering method (SCM) and demonstrate how it provides a convenient framework to analyse the evolution of a multi-directional wave field through a large random array of inclusions. I will consider several applications of the method in acoustics and water wave science. In particular, I will discuss some model predictions based on the SCM that generated key insights into the directional properties of water wave fields propagating in ice-covered oceans.

Mark Flegg

Monash University

Date: Wednesday 17 August 2016

Biological cells are the fundamental building blocks of life. At a molecular level, a cell operates according to the hard mathematical laws of physics and chemistry. Encoded in the network of molecular interactions are robust mechanisms which collectively determine the properties of life itself. Mathematical insight into cell scale behaviour is fundamentally limited by the computational scalability and convergence of mathematical frameworks that are used to describe physical systems at molecular scales (both spatial and temporal). In this presentation, I will highlight the main problems with classical mathematical approaches used to study intracellular spatio-temporal environments and present multiscale methods I have developed in the last 5 years which have allowed for improved accuracy and efficiency. The objective of this research is to lay mathematical foundations for progress in the highly interdisciplinary mission of whole cell simulation at the level of individual molecules, a goal which has been termed a 'Grand Challenge of the 21st Century'. The mathematical content of this talk is rather varied, as is the nature of applied mathematics. This research draws on partial differential equation theory, perturbation theory, N-body theory, random walks and stochastic processes as well as a number of miscellaneous areas of mathematics.

Chris Stevens

Department of Mathematics and Statistics

Date: Tuesday 16 August 2016

The conformal field equations (CFEs) are a different mathematical representation of Einstein's field equations that allows one to study “infinity” of a space-time without any sort of limiting procedure. This is of interest because, in general relativity, infinity is the only place where energy is well defined.

In this talk, the main ideas of the CFEs will be discussed, along with the issues associated with forming an initial boundary value problem (IBVP) for them. A framework for the IBVP will be presented and numerical evidence of its success will be given. As an application I will discuss the problem of shooting a gravitational wave into a black hole. In particular, I will discuss how the IBVP is formulated for this situation and how to calculate the so-called “Bondi-energy” at infinity. The resulting expression is found to reproduce the famous Bondi-Sachs mass loss.

Petru Cioica-Licht

Department of Mathematics and Statistics

Date: Monday 15 August 2016

Stochastic partial differential equations (SPDEs, for short) are mathematical models for evolutions in space and time, which are influenced by noise. They are aimed at describing phenomena in physics, chemistry, epidemiology, economics, and many other disciplines. Although we can prove existence and uniqueness of a solution to various classes of such equations, in general, we do not have an explicit representation of this solution. Thus, in order to make those models ready to use for applications, we need efficient numerical methods for approximating their solutions. And to determine the efficiency of an approximation method, we usually need to analyse the regularity of the target object, which is, in our case, the solution of the SPDE.

The aim of this talk is to present some recent results concerning the regularity of SPDEs and to point out their relevance for the question of developing efficient numerical methods for solving these equations. Before doing this, we first explain the meaning of the different parts of a typical SPDE. For simplicity, we focus on the most basic example, the stochastic heat equation driven by a (cylindrical) Wiener process. It arises from the common deterministic heat equation if we add what is called 'white noise'.
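As a rough sketch in standard notation (the symbols $u$, $\Delta$, $W$ and $u_0$ are assumptions, not taken from the abstract), adding white noise to the deterministic heat equation for an unknown $u$ with initial value $u_0$ leads to the stochastic heat equation, usually written in differential form:

$$
\partial_t u = \Delta u
\qquad\longrightarrow\qquad
\mathrm{d}u(t) = \Delta u(t)\,\mathrm{d}t + \mathrm{d}W(t), \quad u(0) = u_0 .
$$

Here $\Delta$ denotes the Laplacian in the space variable and $W$ a (cylindrical) Wiener process, whose formal time derivative plays the role of the white noise. The precise setting used in the talk, such as the domain and boundary conditions, may differ.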

Kay Jin Lim

Nanyang Technological University

Date: Wednesday 3 August 2016

This is joint work with Susanne Danz.

Note day, time and venue of this special seminar

Igor Klep

University of Auckland

Date: Thursday 9 June 2016

Richard Norton

Department of Mathematics and Statistics

Date: Thursday 2 June 2016

Honours and PGDip students

Department of Mathematics and Statistics

Date: Friday 27 May 2016

Michel de Lange : Deep learning

Georgia Anderson : Probabilistic linear discriminant analysis

Nick Gelling : Automatic differentiation in R

15-MINUTE BREAK 2.40-2.55

MATHEMATICS

Alex Blennerhassett : Toeplitz algebra of a directed graph

Zoe Luo : Wavelet models for evolutionary distance

Xueyao Lu : Making sense of the λ-coalescent

Terry Collins-Hawkins : Reactive diffusion in systems with memory

Josh Ritchie : Linearisation of hyperbolic constraint equations

Also

CJ Marland : Extending matchings of graphs: a survey

This one mathematics project presentation takes place at 12 noon on Thursday 26 May, room 241

Mike Hendy

Date: Thursday 12 May 2016

In this seminar I will reflect on the role that each of these 4 problems had in my own career as a researcher in mathematics and give an outline of each problem. I hope others might also see that "playing" with such problems could be useful in motivating and training future mathematics researchers.

Petru Cioica-Licht

Department of Mathematics and Statistics

Date: Thursday 28 April 2016

The aim of this talk is to present some recent results concerning the regularity of SPDEs and to point out their relevance for the question of developing efficient numerical methods for solving these equations. Before doing this, we first explain the meaning of the different parts of a typical SPDE. For simplicity, we focus on the most basic example, the stochastic heat equation driven by a (cylindrical) Wiener process. It arises from the common deterministic heat equation if we add what is called 'white noise'.

Julia Gog

University of Cambridge; NZMS Forder Lecturer

Date: Tuesday 5 April 2016

Detailed medical insurance claims data from the US in 2009 allow us to explore the spatial dynamics of a pandemic in greater depth than ever before. This talk will outline what we observed in terms of spatial and temporal dynamics of the pandemic in the US. Modelling work allows us to test hypotheses on the importance of different factors, such as whether schools were in session, climate and city population size, to see which were important in determining the dynamics of disease spread.

Here I will also show results from ongoing studies with collaborators and some of the challenges. We have very fine-grained spatial data, and clearly we would like to use this, but disaggregating too far leaves us with little signal. With fitted models and a bit of mathematical creativity, we can infer likely transmission routes during the pandemic and hypothesize what the phylogeography (spatial distribution of viral variants) might look like. Finally, looking at different age groups separately reveals a little more about why the pandemic wave was so slow.

Julia Gog

University of Cambridge; NZMS Forder Lecturer

Date: Monday 4 April 2016

Mathematics is an essential tool for helping us understand and control infectious diseases, from the scale of a single virus particle through to a global pandemic. Using detailed data and the toolkit of mathematical modelling, we explore the 2009 influenza pandemic at a greater depth than was possible for any previous pandemic. The results are surprising. We know the modern world is astonishingly well connected internationally so things should spread quickly. However, influenza does not like to conform to our expectations!

Mark Kayll

University of Montana

Date: Thursday 24 March 2016

Jörg Frauendiener

Date: Wednesday 23 March 2016

In 1916 Einstein predicted on the basis of his new theory of general relativity that gravitational waves should exist. Since the early 1960s scientists tried to measure them but the search has been unsuccessful until very recently. On the 14th of September 2015 the two LIGO detectors measured a gravitational wave signal which could only have come from a binary black hole system. What does this measurement mean for science and for us?

Francesc Fàbregas Flavià

École Centrale de Nantes

Date: Thursday 17 March 2016

Such models have been developed as specialized software, generally using Boundary Element Methods (BEM), in the framework of the theory of potential flow for the description of wave/device interaction. They are globally efficient for the optimization of one device alone or a small group of devices under simplified and rather idealized conditions.

But now, as we advance towards application to real cases of multi-MW farms featuring, for instance, O(100) machines, these models can no longer be used for optimization and a new generation of fast-running computer codes must be developed.

Gordon Hiscott

Department of Mathematics and Statistics

Date: Thursday 3 March 2016

Hyuck Chung

Auckland University of Technology

Date: Tuesday 17 November 2015

Project presentations, Maths honours students

Department of Mathematics and Statistics

Date: Friday 23 October 2015

2.25 : Pareoranga Luiten-Apirana, Morita equivalence of Leavitt path algebras

2.50 : Tom McCone, Primitive ideals in graph algebras

Dimitrios Mitsotakis

Victoria University of Wellington

Date: Tuesday 6 October 2015

Robert Calderbank

Duke University

Date: Tuesday 29 September 2015

Ingrid Daubechies, AMS-NZMS 2015 Maclaurin Lecturer

Duke University

Date: Monday 28 September 2015

Ingrid Daubechies, AMS-NZMS 2015 Maclaurin Lecturer

Duke University

Date: Monday 28 September 2015

Craig Bauling and Gerrard Liddell

Wolfram Research, Mathematics and Statistics Department

Date: Friday 25 September 2015

Charles Semple

University of Canterbury

Date: Tuesday 22 September 2015

Jörg Frauendiener

Department of Mathematics and Statistics

Date: Tuesday 15 September 2015

Nicola Gaston

Victoria University of Wellington

Date: Tuesday 25 August 2015

It is not news to anyone working in scientific or STEM fields that the proportion of men and women in these careers is out of balance. Outreach targeted at encouraging young women into STEM careers has become rather fashionable, and discussions of both the numeric imbalances [1] and suggested explanations for the gender disparity [2] have become increasingly accessible. This talk will outline published literature on both the data that demonstrate gender disparity [3,4] and behavioural studies [5] that begin to explain why gender disparity in the sciences is persistent, with the aim of increasing general understanding of a rather complex issue. It will conclude with a discussion of what needs to change in order for gender equality to improve across the board, and discuss new studies that give insight into why some scientific disciplines, such as physics and mathematics, seem to perform so much worse than other disciplines such as biology in this respect.

[1] Nature 495, 5, 22-24, 25-27, 28-31, (07 March 2013)

[2] The Economist, Promotion and Self-Promotion, (31 August 2013)

[3] Guiso, L.; Monte, F.; Sapienza, P.; Zingales, L. 2008. Culture, gender, and math. Science 320:1164-1165.

[4] Knobloch-Westerwick, S.; Glynn, C.J.; Huge, M. 2013. The Matilda Effect in science communication: An experiment on gender bias in publication quality perceptions and collaboration interest. Science Communication 35:603-625.

[5] Moss-Racusin, C.A.; Dovidio, J.F.; Brescoll, V.L.; Graham, M.J.; Handelsman, J. 2012. Science faculty's subtle gender biases favor male students. Proceedings of the National Academy of Sciences 109:16474-16479.

Thomas Forster

Cambridge University

Date: Monday 17 August 2015

The Axiom of Choice is probably the cause of more anxiety and unproductive disputation than any other proposition of pure mathematics. There is probably a majority of mathematicians who profess to believe it, but it's only a minority (even of the believers) who can state it correctly, and for most mathematicians its purpose and meaning are shrouded in smog. This talk is a backgrounder for a “General Pure” audience (not logicians!). Technicalities will be kept to an absolute minimum.

Dougal McQueen

University of Canterbury

Date: Tuesday 11 August 2015

Misi Kovács

Department of Mathematics and Statistics

Date: Tuesday 28 July 2015

David Bryant

Department of Mathematics and Statistics

Date: Tuesday 21 July 2015

Note time and venue

Johannes Mosig

Department of Mathematics and Statistics

Date: Tuesday 21 July 2015

The sea ice extent is correlated with ocean waves, generated by storms which have become stronger and more frequent in the past decades. My PhD project aims to contribute to our understanding of these interactions between water waves and sea ice. In the present talk I will present the current state of this project. The first part is concerned with viscoelastic continuum models. More recently I began to investigate the influence of the shape of ice floes on their scattering behavior. I will briefly introduce the idea of generalized polynomial chaos (gPC) which I want to use to model the scattering of water waves from a random-shaped ice floe.

Graeme Wake

Massey University Auckland

Date: Tuesday 2 June 2015

Successfully implemented in 20+ countries worldwide, these intensive week-long workshops offer a collaborative environment to solve problems arising in industry. Scientists participate from a range of mathematical disciplines such as dynamic systems, statistics, and operational research.

This unconventional model sees companies paying $6000 each up-front for the rare opportunity to have their meatiest challenges tackled by mathematicians from across the country. Support is available for students to attend, post-workshop contracts are possible, and employment opportunities may arise.

2. Non-local calculus is a sorely neglected topic in the undergraduate, or even the graduate, curriculum. These problems arise when cause and effect are separated by time or space. They come from important applications surprisingly often. Usually the solution possesses a rich structure missing in the classical local models.

Examples will be given with emphasis on the highly valued rye-grass/clover mixtures model, often quoted as “one of the best pieces of science to come from AgResearch, NZ’s largest Crown Research Institute”, as stated by Dr Andy West, ex-CEO.

Honours and PGDip students

Department of Mathematics and Statistics

Date: Friday 22 May 2015

Yunan Wang: Binary segmentation for change-point detection in GPS time series

Patrick Brown: Investigating dynamic time series models to predict future tourism demand in New Zealand

Alastair Lamont: Hierarchical modelling approaches to estimate genetic breeding values

Lyco Wen: Effects of gene by environment interaction on hyperuricemia and related gout risk

15-MINUTE BREAK 2.20-2.35

MATHEMATICS

Callum Nicholson: Wavelets and direct limits

Pareoranga Luiten-Apirana: Morita equivalence of Leavitt path algebras

Tom McCone: Primitive ideals in graph algebras

Naomi Ingram

College of Education

Date: Tuesday 19 May 2015

Vivien Kirk

University of Auckland

Date: Tuesday 5 May 2015

A common first step in the analysis of such a model is to simplify the model by identifying and eliminating variables that evolve over the fastest or slowest timescales. Model simplification of this type can sometimes be rigorously justified, but in many cases it is done in an ad hoc way and results in important features of model dynamics being destroyed.

This talk will describe some recent results about simplification techniques for models with multiple timescales, pointing out common pitfalls and identifying conditions under which simplifications might be mathematically justified.

Monika Balvočiūtė

Department of Mathematics and Statistics

Date: Thursday 23 April 2015

Endre Süli

University of Oxford; NZMS Forder Lecturer

Date: Friday 17 April 2015

We shall survey recent analytical and computational results for coupled macroscopic-microscopic bead-spring chain models that arise from the kinetic theory of dilute solutions of incompressible polymeric fluids with noninteracting polymer chains, involving the unsteady Navier-Stokes system in a bounded domain and a high-dimensional Fokker-Planck equation. The Fokker-Planck equation emerges from a system of (Itô) stochastic differential equations, which models the evolution of a vectorial stochastic process comprised by the centre-of-mass position vector and the orientation (or configuration) vectors of the polymer chain. We discuss the existence of global-in-time weak solutions to the coupled Navier-Stokes-Fokker-Planck system. The numerical approximation of this high-dimensional coupled system is a formidable computational challenge, complicated by the fact that for practically relevant spring potentials the drift term in the Fokker-Planck equation is unbounded.

Endre Süli

University of Oxford; NZMS Forder Lecturer

Date: Thursday 16 April 2015

Numerical solution of PDEs is a rich and active field of modern applied mathematics. The steady growth of the subject is stimulated by ever-increasing demands from the natural sciences, engineering and economics to provide accurate and reliable approximations to mathematical models involving partial differential equations (PDEs) whose exact solutions are either too complicated to determine in closed form or, in many cases, are not known to exist. While the history of numerical solution of ordinary differential equations is firmly rooted in 18th and 19th century mathematics, the mathematical foundations of the field of numerical solution of PDEs are much more recent: they were first formulated in the landmark paper Über die partiellen Differenzengleichungen der mathematischen Physik (On the partial difference equations of mathematical physics) by Richard Courant, Karl Friedrichs, and Hans Lewy, published in 1928. The aim of the lecture is to survey several modern developments in the theory of finite difference methods for partial differential equations that rely on tools from functional analysis, harmonic analysis and function space theory.

Fabien Montiel

Department of Mathematics and Statistics

Date: Tuesday 31 March 2015

Christine Franklin

Visiting Fulbright scholar University of Auckland, University of Georgia

Date: Thursday 26 March 2015

The United States is realizing the need to achieve a level of quantitative literacy for its graduates to prepare them to thrive in the modern world. Given the prevalence of statistics in the media and workplace, individuals who aspire to a wide range of positions and careers require a certain level of statistical literacy. Because of the emphasis on data and statistical understanding, it is crucial for us as educators to consider how we can prepare a statistically literate population. Students must acquire an adequate level of statistical literacy through their education beginning in the first grade of education.

The Common Core State Standards for mathematics (that include statistics) in grades Kindergarten – 12 have been adopted by most states and the District of Columbia. These national standards for the teaching of statistics and probability range from counting the number in each category to determining statistical significance through the use of simulation and randomization tests. Soon, and for the first time, most of our entering college students will have been taught some statistics and probability, so our introductory college and university statistics courses will have to change. In addition, we must rethink the preparation of future K–12 teachers to teach this curriculum. Change in teacher preparation must thus be implemented in order to respond to the call from society for an increase in statistical understanding.

This presentation will provide a brief history of statistics at K-12 in the United States, an overview of the statistics and probability content of these standards, and resources that support the K-12 standards in statistics. It will also consider the effect on our introductory university statistics courses and describe the knowledge and preparation needed by the future and current K–12 teachers who will be teaching using these standards. A new American Statistical Association strategic initiative, the Statistical Education of Teachers, will be outlined and the desired assessment of statistics at K-12 on the high stakes national tests will be explored.

This is a joint seminar by the Department of Mathematics and Statistics and Otago Mathematics Association (OMA)

Phillip Wilcox

Scion (New Zealand Forest Research Institute Ltd); Department of Biochemistry

Date: Thursday 12 March 2015

Timothy Williams

Nansen Environmental and Remote Sensing Centre, Bergen, Norway

Date: Wednesday 11 March 2015

In this talk I will present some preliminary results of coupling a directional scattering model of waves with sea ice break-up. The scattering model is based on the multiple scattering model of Foldy (1945) and has been used by many researchers (e.g. Masson & LeBlond, 1987).

Retaining the scattered energy in the system (as it should be), instead of simply dissipating it as is currently done in early implementations of quasi-operational waves-in-ice models, has implications for the wave field in the ice, the amount of ice broken up by a given wave field that is arriving from the open ocean, and for momentum transfer from the waves to the ice.

I will also give some background to the problem of modeling waves-in-ice.