Evolving fluid boundaries: from lava to pancakes

Prof Mathieu Sellier

University of Canterbury

Date: Friday 13 August 2021

Joint Mathematics and Physics seminar

Many flows encountered in our daily lives involve a moving boundary. The shape of a raindrop, for example, evolves as it falls through the air. Likewise, the free surface of a river deforms as it encounters obstacles. While the mathematical ingredients required to describe such flows have been known since the late 19th century and are encapsulated in the Navier-Stokes equations, solving complex flows with a moving boundary or interface still poses significant challenges and provides stimulating cross-disciplinary research opportunities. The question at the centre of the research I will present is “if information about the evolution of a moving interface is available, can we indirectly infer unknown properties of the flow?” Such a question falls in the realm of inverse problems for which one knows the effect but is looking for the cause. Specifically, I will talk about how it is possible to estimate the fluid properties of lava just by looking at how it flows or what is the best way to rotate a pan to cook the perfect crêpe.

210804105609

The Lottery Ticket Hypothesis

Brendan McCane

Otago Computer Science

Date: Tuesday 1 June 2021

In the last couple of years, empirical evidence has hinted that the lottery ticket hypothesis might be the reason why deep neural networks with millions or sometimes billions of parameters do not over-train. The lottery ticket hypothesis states that a sub-network of a large network will be just as good as the large network - it’s a winning ticket – and the emergence of such a sub-network is extremely probable. I will describe some of the empirical evidence for the lottery ticket hypothesis, and outline a more theoretical argument, based on dubious assumptions, that might even be considered a proof.

210520140623

Bose-Einstein condensates on bounded space domains

Robert A. Van Gorder

University of Otago

Date: Tuesday 25 May 2021

Predicted theoretically in the 1920s and finally discovered experimentally in the 1990s, Bose-Einstein condensates (BECs) continue to motivate work in theoretical and experimental physics. Although experiments on BECs are necessarily carried out in bounded space domains, most theoretical work modelling BECs involves solving the Gross-Pitaevskii equation (GPE) for the macroscopic wavefunction on infinite domains, since the combination of bounded domains and spatial heterogeneity renders commonly employed analytical approaches ineffective. In this talk, we discuss how to “solve” the GPE for both stationary and time-varying BECs on bounded space domains, outlining two analytical methods for their approximation, and then comparing each method with direct numerical simulation of the GPE. We then employ these techniques to explore a variety of interesting BEC behaviors.

Students are welcome to attend!

210518105619

How are Mathematics and Statistics taught in New Zealand’s schools?

David Bryant

University of Otago

Date: Tuesday 18 May 2021

The government is currently making major changes to the mathematics and statistics curriculum for high schools, and reviewing the way that maths and stats are taught at all levels. I think that academics have a vested interest in getting involved in these discussions. In this talk I’m going to introduce the NCEA system and discuss the ideas behind the current curriculum and assessment. I will talk about some of the new directions with curriculum, and a little of the context of the current Royal Society review. My plan is to keep things concise but hopefully accessible.

210513155341

A game of hats

Anindya Sen

Department of Accountancy and Finance

Date: Tuesday 4 May 2021

We start with a puzzle involving 100 prisoners wearing black and white hats, of the type often asked in interviews. (I recommend trying it before coming to the seminar.) It turns out that the rather surprising solution can be generalized to any number of prisoners and any number of hat colours. But things get really interesting when we have infinitely many prisoners...

The Puzzle: A sadistic prison warden plays the following game with a group of 100 prisoners. They are to be lined up in the morning, each of them wearing a hat which is either black or white, in such a way that each prisoner can see the hats of everyone in front of him. Then the warden will start from the back, loudly asking each prisoner “What's the colour of your hat?” and each prisoner has to shout out “Black” or “White” so that everyone can hear. If they say anything else they will be instantly executed. If the colour is correct they go free, else they are executed. (Yes, this is a bit morbid.) The prisoners are given one evening to discuss a strategy to save themselves as far as possible. What is the maximum number of prisoners who can be saved with certainty?

Surprisingly enough, the answer is “All but one”.
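
For readers who have already attempted the puzzle, the sketch below simulates one well-known strategy that reaches this bound, the parity strategy. It is an illustration only and not necessarily the argument presented in the talk. The encoding (0 for white, 1 for black) and the function names `play` and `count_saved` are assumptions made for this example.

```python
import random


def play(hats):
    """Return the colours shouted out, back of the line first.

    hats[0] belongs to the prisoner at the back, who sees hats[1:].
    Colours are encoded as 0 (white) and 1 (black); this is an assumed encoding.
    """
    n = len(hats)
    # The back prisoner cannot know his own hat, so he announces the parity
    # of the black hats he sees in front of him.
    guesses = [sum(hats[1:]) % 2]
    for i in range(1, n):
        seen = sum(hats[i + 1:]) % 2      # hats prisoner i sees in front
        heard = sum(guesses[1:i]) % 2     # answers already given behind him
        # Announced parity minus everything accounted for leaves his own hat.
        guesses.append((guesses[0] - seen - heard) % 2)
    return guesses


def count_saved(hats, guesses):
    """Number of prisoners whose shouted colour matches their hat."""
    return sum(1 for h, g in zip(hats, guesses) if h == g)


if __name__ == "__main__":
    hats = [random.randint(0, 1) for _ in range(100)]
    print(count_saved(hats, play(hats)))
```

Only the first answer carries no information about the speaker's own hat, so that prisoner survives with probability one half while everyone in front of him is saved.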

210428152654

What happens when you shoot a gravitational wave at a black hole?

Chris Stevens

Department of Mathematics and Statistics

Date: Tuesday 27 April 2021

Recently, the first direct detections of gravitational waves -- ripples in the fabric of space-time -- have occurred. This is a momentous achievement, undoubtedly one of the greatest of mankind: the complex theoretical underpinning working in harmony with the huge multi-kilometer-long laser interferometers of LIGO, Virgo and KAGRA, which can detect a change in distance 1/10,000th the width of a proton, is astounding, and led to the 2017 Nobel Prize in Physics. The most powerful source of gravitational radiation comes from the collision of two black holes. This process distorts space and time on an unimaginable scale, and wave bursts releasing more than $10^{30}$ times the energy of the largest man-made explosion have been detected. What happens when this burst of radiation hits a lone black hole? This talk explores a number of recent analytical and numerical results which, although very general, are ideal for studying certain aspects of this question. An initial boundary value problem is presented which is solved numerically and has the very nice property of including "null infinity" (where gravitational waves are unambiguously defined) in the computational domain. A generalization of the Bondi 4-momentum integral formula is presented and the Bondi energy is calculated.

210421145020

Applications of semiclassical analysis in harmonic analysis

Melissa Tacy

University of Auckland

Date: Tuesday 13 April 2021

Semiclassical analysis arose as a set of techniques for studying the high energy (or semiclassical) limit of quantum mechanics. These techniques however can be used for a wide range of problems in PDE and harmonic analysis that feature a large (or small) parameter. In this talk I will discuss some of the applications of semiclassical analysis to harmonic analysis and the intuitions that drive this theory.

210407123439

Tree rearrangement operations on discrete time-trees

Lena Collienne

Computer Science, University of Otago

Date: Tuesday 30 March 2021

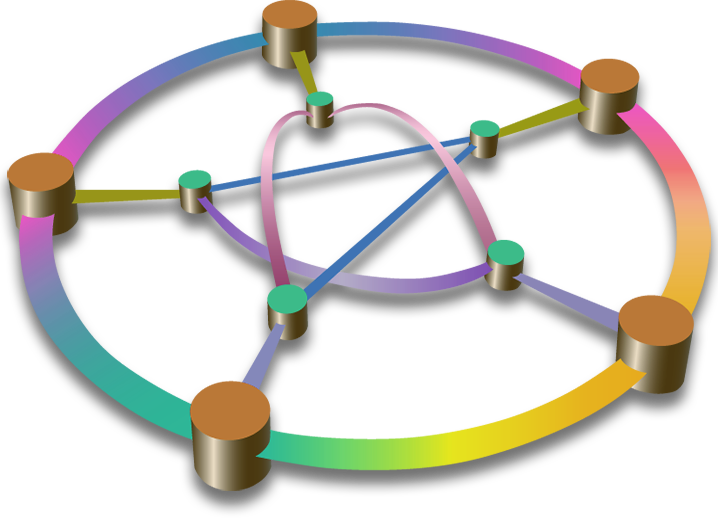

Many popular algorithms for searching the space of leaf-labelled (phylogenetic) trees are based on tree rearrangement operations. Under any such operation, the problem is reduced to searching a graph where vertices are trees and (undirected) edges are given by pairs of trees connected by one rearrangement operation. Most popular are the classical nearest neighbour interchange, subtree prune and regraft, and tree bisection and reconnection moves. The problem of computing distances, however, is NP-hard in each of these graphs, making tree inference and comparison algorithms challenging to design in practice. Although phylogenetic time-trees are one of the central objects of interest in applications such as cancer research, immunology, and epidemiology, the computational complexity of the shortest path problem for these trees remained unsolved for decades. In this talk, we introduce tree rearrangement operations on discrete coalescent trees, which are a discretisation of time-trees, where internal nodes are equipped with times. We show that shortest paths under this operation can be computed in polynomial time, and establish several geometrical properties of the tree space. In particular, we will also consider ranked trees as a special case of discrete coalescent trees.

210324153906

No-flux boundary conditions for jumping particles

Lorenzo Toniazzi

University of Otago

Date: Tuesday 23 March 2021

A standard model for diffusion in an interval $[0,1]$ with no-flux boundary conditions is the heat equation $\partial_t p =\partial^2_x p$ with Neumann conditions $\partial_x p=0$ at 0 and 1. A probabilistic equivalent model is a Brownian motion that cannot flow outside of $[0,1]$ due to 'walls' placed at 0 and 1. Or one could give at least five different modifications of the trajectories of the Brownian motion that result in the same no-flux diffusion model on $[0,1]$. Instead, the picture is very different if we try to model super-diffusion (mean-square displacement growing faster than linearly). This is because, rather than the local second derivative $\partial^2_x$, we need to treat nonlocal integro-differential operators. The probabilistic viewpoint here is that particles can jump (hence the nonlocality) and this implies that many different ways to impose no-flux boundary conditions are possible. So we first give an overview of several of the possible models for super-diffusion on $[0,1]$ with no-flux boundary conditions and their applications in finance, hydrology and queues. Then we present our recent work characterising Neumann and fast-forwarding boundary conditions for many Lévy jump processes. Here fast-forwarding means allowing the particle to move freely in and out of the domain but disregarding the time spent outside. This talk will avoid most technicalities and provide many simulations to illustrate the results.
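
As a small illustration of the classical (local) case described above, the following sketch steps the heat equation on $[0,1]$ with zero-flux ends using an explicit finite-volume scheme. It is not taken from the talk: the cell-centred grid, the ratio `r` (time step divided by the squared cell width) and the function names are assumptions made for this example. The no-flux condition is imposed by mirroring the end cells, so the total mass is conserved.

```python
def neumann_heat_step(p, r):
    """One explicit step of p_t = p_xx on a cell-centred grid with no-flux ends.

    p holds the mass in each cell and r = dt / dx**2 (assumed notation).
    """
    n = len(p)
    new = []
    for i in range(n):
        # Mirror the end cells: a ghost cell equal to its neighbour gives zero flux.
        left = p[i - 1] if i > 0 else p[0]
        right = p[i + 1] if i < n - 1 else p[n - 1]
        # Written as a convex combination, which is non-negative for r <= 0.5.
        new.append((1.0 - 2.0 * r) * p[i] + r * left + r * right)
    return new


def diffuse(p, r, steps):
    """Apply neumann_heat_step repeatedly; r must satisfy 0 < r <= 0.5 for stability."""
    if not 0.0 < r <= 0.5:
        raise ValueError("explicit scheme requires 0 < r <= 0.5")
    for _ in range(steps):
        p = neumann_heat_step(p, r)
    return p


if __name__ == "__main__":
    n = 50
    p0 = [0.0] * n
    p0[10] = 1.0                      # all mass starts in one cell
    p = diffuse(p0, r=0.4, steps=5000)
    print("total mass:", sum(p))
    print("spread between cells:", max(p) - min(p))
```

The profile relaxes towards the uniform distribution while the total mass stays fixed, which is the behaviour the no-flux condition is meant to capture.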

210318152750

Sea ice: the next generation

Chris Horvat

Brown University, USA

Date: Tuesday 16 March 2021

The polar oceans, those covered by sea ice at least during some part of the year, cover less than 7% of Earth's surface area, yet have the greatest pound-for-pound impact on Earth's energy budget. The rapid decline of summer/fall Arctic sea ice, a change in just 0.3% of Earth's surface, has increased planetary warming by 25% of total anthropogenic greenhouse gas emissions. Yet polar oceans are challenging to observe, describe, and model for many reasons, not least of which is their remoteness. As a consequence, I'll show that climate models remain unable to represent the loss of sea ice in any month of the year, even accounting for internal variability and differences between climate models - and exhibit wide inter-model variability in mean sea ice state. Major changes are needed in how sea ice and the polar oceans are understood, observed and modeled. I'll talk about new efforts to do this - observations from the ICESat-2 and CryoSat-2 altimeters of waves propagating through and fracturing sea ice, new modeling and observations of wide-spread under-ice phytoplankton blooms, and of sub-mesoscale mixed-layer variability energized by sea ice fragmentation. Together these indicate that sea-ice-covered regions are far more interesting and heterogeneous than climate models give them credit for. With the failure of sea ice models to capture sea ice's response to global warming, these advances motivate the advent of a new generation of coupled sea ice models. I'll then talk about the Scale Aware Sea Ice Project, a new initiative with colleagues in the US, UK, France, and Norway, where we will develop a new multi-scale sea ice model --- one where the fractured and heterogeneous nature of sea ice and its coupling with the ocean and surface waves are at the forefront.

210308123916

Dependencies within and among Forensic Match Probabilities

Bruce Weir

Department of Biostatistics, University of Washington

Date: Friday 5 February 2021

DNA profiling has become an integral tool in forensic science, with widespread public acceptance of the power of matching between an evidence sample and a person of interest. The rise of direct-to-consumer genetic profiling has extended this acceptance to findings of matches to distant relatives of the perpetrator of a crime. Along with the greater discriminating power of profiles as forensic scientists have moved from Alec Jeffreys’ “DNA fingerprinting” to next-generation sequencing has come the need to re-examine the usual assumptions of independence among the components of forensic profiles. It may still be appropriate to regard variants at a single marker as being inherited independently, but it is doubtful that all 40 components in a 20-locus STR profile are independent, let alone neighbouring sites in an NGS profile. The very basis for forensic genealogy is that human populations contain many pairs of distant relatives, whose profile probabilities are not independent. The expansion of forensic typing to include Y-chromosome and mitochondrial markers and protein variants raises even further questions of independence. These issues will be discussed and illustrated with forensic and other genetic data, all within a re-examination of the concept of identity by descent and current work to estimate measures of identity within and between individuals, and within and between populations.

210201131314

Conformal geometry and taming infinity

Prof Rod Gover

Department of Mathematics, University of Auckland

Date: Thursday 3 December 2020

The world around us appears to involve lengths and angles. From these emerge the classical notions of shape and symmetry configured in 3 dimensions. Our mathematical ancestors realised that these notions should be important, not only for construction and surveying, but also for understanding "life, the universe and everything". In the process of simplifying complicated structures it turns out that an important role is played by conformal geometries -- these are spaces where there is a notion of angle but not length. We will discuss some elegant tools for working with these less than rigid geometries and how they help treat other problems such as compactification. This is the theory behind making non-compact spaces compact by adding points in some appropriate way. There are applications to the representation theory of groups, geometric analysis, and physics.

201127112249

Bayesian sequential inference (filtering) in a functional tensor train representation

Colin Fox

Physics, University of Otago

Date: Tuesday 17 November 2020

Colin Fox (Physics, Otago), joint work with Sergey Dolgov (Mathematics, Bath).

Bayesian sequential inference, a.k.a. optimal continuous-discrete filtering, over a nonlinear system requires evolving the forward Kolmogorov equation, that is a Fokker--Planck equation, in alternation with Bayes’ conditional updating. We present a numerical grid-method that represents density functions on a mesh, or grid, in a tensor train (TT) representation, equivalent to the matrix product states in quantum mechanics. By utilizing an efficient implicit PDE solver and Bayes' update step in TT format, we develop a provably optimal filter with cost that scales linearly with problem size and dimension. This ability to overcome the ‘curse of dimensionality’ is a remarkable feature of the TT representation, and is why the recent introduction of low-rank hierarchical tensor methods, such as TT, is a significant development in scientific computing for multi-dimensional problems. The only other work that gets close to the scaling we demonstrate in high-dimensional settings is due to Stephen S.T. Yau and his Fields-medallist brother Shing-Tung Yau. We give a gentle introduction to filtering, functional tensor train representations and computation, present some examples of filtering in up to 80 dimensions, and explain why we can do better than a Fields medallist.

201110160229

Toward building a conceptual model on wave transformation over shore platforms and Wave transformation on a fringing reef system with spurs and grooves structures

Raphael Krier and Cesar Acevedo Ramirez

Department of Geography, University of Otago

Date: Tuesday 20 October 2020

This research focuses on wave transformation over intertidal shore platforms. Intertidal shore platforms act as natural buffers dissipating wave energy in rocky shore environments. In the context of sea level rise and increasing storminess, cliff erosion and overwash hazards are rising issues in rocky shore environments. The understanding of wave transformation represents a first step toward the characterisation of rock coast erosion mechanisms in response to a changing climate. Unlike sandy beaches, characterised by a seasonal or inter-annual erosion/accretion cycle, cliff erosion is an irreversible process threatening rock coasts which represent 80% of the world’s coastline and 23% of New Zealand’s coastline. Wave transformation on shore platforms has mostly been studied over linear transects which limited previous observations to two dimensions. The aim of my research is to redefine shore platform hydrodynamic processes in three dimensions and assess the effects of platform morphology on wave transformation. This will allow us to establish conceptual models coupling morphology and hydrodynamics. These types of models are well developed on sandy beaches, but currently lacking in rocky shore environments, and the information they provide can be useful to assess the level of hydrodynamic forcing acting on different rocky shores and, subsequently, cliff erosion rates.

$\cdots$

Spurs and Grooves (SAG) structures can be found on coral reefs around the world. However, there are few studies that relate SAG morphology with wave transformation processes. Using an array of pressure sensors and current meters deployed over 10 days we present observations of wave dissipation over SAG structures at Xahualxol, Quintana Roo, Mexico. This site has a different morphology of SAG compared to previous studies. Our results indicate that SAG structures are more important in wave transformation than has previously been reported. The rate of dissipation (up to 80 W/m$^2$) and the wave dissipation friction factor $f_w$ (1.1) found are also high compared to previous values found on other reefs. We also found that the wave dissipation rate over the spurs can be up to three times higher than in the adjacent grooves. This study demonstrates that SAG morphologies play a discernible role in wave dissipation over the forereef.

201012121910

Reconstructing the evolution of Bayesian brains by computational modelling

Mike Paulin

Department of Zoology, University of Otago

Date: Tuesday 13 October 2020

Placozoans are the smallest, simplest animals on Earth. They are microscopic patches of bacteria-eating epithelium, which evolved about 560 million years ago, were the first animals that moved, and may be ancestors of all modern animals with nervous systems, i.e. of all animals except sponges and placozoans themselves. I will show, using realistically constrained biophysical models and simulations, how placozoans’ ability to perceive bacteria by contact chemosensing (taste) can be extended in a remarkably simple way to perceive salient aspects of the world beyond their body surface, by constructing a map in their epithelial margin of the Bayesian posterior density of external signal sources given receptor states. This leads to an elegant and possibly true model of statistically optimal perception, decision-making and movement control at the algorithmic level, in a ridiculously simple animal without a nervous system. The algorithm can be ported to spiking neural networks in a straightforward way. This may be a basic computational motif which has evolved by duplication, specialization and recursion (i.e. observer modules passing inferences to each other as priors and data) to produce nervous systems capable of dynamical statistical inference, planning and control in animals like us, with complex anatomy living in complex modern ecosystems.

201009130855

3D Models From Radiographs

Dr Hamza Bennani

Department of Computer Science at Otago

Date: Tuesday 6 October 2020

This research investigates an accurate method for three-dimensional (3D) reconstruction of the human spine from bi-planar radiographs with comparable results to CT scans or MRI. In this work, we generated a publicly available dataset which corresponds to the training data used. We subsequently solved the problem of correspondences using a landmark-free algorithm applied on the vertebrae. Finally, we developed a semi-automatic method based on simulated radiographs for the reconstruction of the human lumbar spine in 3D from bi-planar radiographs.

We validated the results in vitro on radiographs of dried vertebrae with models constructed from a laser-scanner, then in vivo on radiographs of living patients with models extracted from CT scans or MRI.

The results show the feasibility of generating personalised models of patients from bi-planar radiographs.

The contributions are:

- Evaluation of the methods for creating 3D models of vertebrae and estimation of the errors in comparison with ground truth data. These methods are applicable to other free-form shapes;

- Creation of landmark-free ASMs of lumbar vertebrae;

- Definition and evaluation of a process for estimating the shape and position of lumbar spine from uncalibrated bi-planar radiographs.

200930131300

The ladder of infinity

Anindya Sen

Department of Accountancy and Finance, University of Otago

Date: Tuesday 29 September 2020

Students of mathematics learn early on that infinity comes in at least two sizes - countable and uncountable. However, that is merely the tip of the iceberg. In this lecture, I will talk about how we can generate the "ladder of infinity" - an infinite sequence of ever larger infinities that stretches to unimaginable heights. On the way we shall meet ordinal numbers, the well-ordering principle and the ZFC axioms. If time permits, we will touch on advanced topics like inaccessible cardinals which are the subject of modern research.

200923160556

The combinatorics of ‘capturing’ a phylogenetic tree from discrete data or distances

Mike Steel

University of Canterbury

Date: Tuesday 11 August 2020

We consider two versions of the following question: What is the smallest amount of ‘data’ required to uniquely determine a phylogenetic (evolutionary) tree? In the first version, the ‘data’ consists of a sequence of discrete states observed at the leaves of a tree, and these states are assumed to have evolved from an unknown ancestral state; either with or without homoplasy. For the second version, the data consists of leaf-to-leaf distances between certain pairs of leaves in the tree. Both questions give rise to some interesting combinatorial subtleties.

200807154023

Initial data sets from parabolic-hyperbolic formulations of the Einstein vacuum constraints

Joshua Ritchie

Mathematics and Statistics Department, University of Otago

Date: Tuesday 21 July 2020

The constraint equations are a subset of the full Einstein equations that describe the universe at a ‘fixed moment of time’. In this talk, we investigate the generic asymptotic behaviour of vacuum initial data sets constructed as solutions of an evolutionary formulation of the constraint equations. Our main focus here is on the construction of ‘physically relevant’ solutions of the constraint equations. To do this we introduce a method that allows us to construct binary black hole solutions.

200717103938

Inner Products on Convex Sets

David Bryant

Mathematics and Statistics, University of Otago

Date: Tuesday 17 March 2020

I've recently been trying to model how ecological niches change over evolutionary time. This led to a bunch of thorny mathematical problems related to random convex sets. How should we think about a random process when the state space is the set of (bounded, closed, convex) random sets? How do we do statistics on these things? And how can we compute with them? It turns out that progress can be made on some of these questions by framing the space of bounded, closed, convex sets as a kind of vector space. We can add sets, and we can scale them. But $X - X$ doesn't equal zero when $|X|>1$. I'll talk about some of the mathematics of these spaces and about our attempts to define an appropriate inner product. This is joint work with Petru Cioica-Licht, Lisa Orloff-Clarke and Rachael Young.
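
The failure of cancellation is easy to see in one dimension. The abstract does not spell out the set operations, so the sketch below assumes the usual Minkowski sum and scalar multiple, restricted to closed intervals represented as `(lo, hi)` pairs; the function names are hypothetical.

```python
def minkowski_sum(a, b):
    """Minkowski sum of two closed intervals: {x + y : x in a, y in b}."""
    return (a[0] + b[0], a[1] + b[1])


def scale(c, a):
    """Scalar multiple of a closed interval: {c * x : x in a}."""
    lo, hi = c * a[0], c * a[1]
    return (min(lo, hi), max(lo, hi))   # a negative c flips the endpoints


if __name__ == "__main__":
    X = (1.0, 3.0)                          # an interval with more than one point
    print(minkowski_sum(X, scale(-1, X)))   # X - X, an interval centred on 0
    point = (2.0, 2.0)                      # a single point
    print(minkowski_sum(point, scale(-1, point)))
```

Under these assumptions $X - X$ is an interval whose width is twice that of $X$, and it collapses to the single point 0 only when $X$ itself is a single point.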

200310161353

Adapting analysis/synthesis pairs to pseudodifferential operators

Melissa Tacy

Mathematics and Statistics Department, University of Otago

Date: Tuesday 10 March 2020

Many problems in harmonic analysis are resolved by producing an analysis/synthesis of function spaces, for example the Fourier or wavelet decomposition. In this talk I will discuss how to use Fourier integral operators to adapt analysis/synthesis pairs (developed for the constant coefficient PDE case) to the pseudodifferential setting. I will demonstrate how adapting a wavelet decomposition can be used to prove $L^{p}$ bounds for joint eigenfunctions.

200304104113

Supporting cooperation via agreement equilibrium

Ronald Peeters

Economics Department, University of Otago

Date: Tuesday 3 March 2020

We introduce 'agreement equilibrium' as a novel solution concept that can explain the abundance of cooperative behavior that is often observed in laboratory experiments in various contexts. The main idea of the agreement equilibrium is to identify behaviors that individuals can (tacitly) agree on while being ambiguous about their opponents' intentions to respect or to betray this (tacit) agreement. We investigate properties of the agreement equilibrium, including in comparison to other equilibrium concepts, and illustrate the agreement equilibrium in a series of famous applications.

200302134134

Nonlinear Dirac equations

Sebastian Herr

Bielefeld University

Date: Tuesday 11 February 2020

In this talk I will start by introducing the Dirac equation as a dispersive PDE and draw a connection to the Restriction Problem in Harmonic analysis. Then, I will turn to nonlinear Dirac equations, such as the Soler model and the Dirac-Klein-Gordon system. Finally, I will describe some recent progress on the regularity theory of the corresponding Cauchy problems and discuss some open questions.

200207111115

Dynamical low-rank approximation

Christian Lubich

Mathematisches Institut Universitaet Tuebingen

Date: Tuesday 12 November 2019

This talk reviews differential equations on manifolds of low-rank matrices or tensors or tree tensor networks. They serve to approximate, in a data-compressed format, large time-dependent matrices and tensors that are either given explicitly via their increments or are unknown solutions of high-dimensional differential equations, such as multi-particle time-dependent Schrödinger equations or kinetic equations such as Vlasov equations. Recently developed numerical time integrators are based on splitting the projector onto the tangent space of the low-rank manifold at the current approximation. In contrast to all standard integrators, these projector-splitting methods are robust with respect to the unavoidable presence of small singular values in the low-rank approximation. This robustness relies on geometric properties of the low-rank manifolds. The talk is based on work done intermittently over the last decade with Othmar Koch, Bart Vandereycken, Ivan Oseledets, Emil Kieri and Hanna Walach.

191108135544

Numerical simulation of slow slip events

Yiming Ma

Mathematics and Statistics, University of Otago

Date: Tuesday 8 October 2019

Slow slip events (SSEs), a type of slow earthquake, play an important role in releasing strain energy in subduction zones, where a tectonic plate bends and slides under another one. Observations of their occurrence patterns can be used to infer the probability of triggering a damaging earthquake at the interface between the two plates. However, the underlying geophysical mechanisms governing SSEs are still not well understood. In this talk, I will introduce a physical model to simulate periodic SSEs, based on dislocation theory and the rate- and state-dependent friction (RSF) law. I will further discuss the sensitivity of the model to the parameters (e.g. constitutive parameters, geometry of the fault) and some computational issues associated with the numerical scheme implemented.

191001110246

Whakatipu te Mohiotanga o te Ira: Growing Māori capability and content in genetics-related education

Phillip Wilcox

Mathematics and Statistics, University of Otago

Date: Thursday 3 October 2019

This seminar will focus on recent efforts at the University of Otago to increase (a) Māori content in statistics, genetics and biochemistry courses, and (b) Māori involvement in genetics-based research and applications.

191001110542

Spherical Splits

Tom McCone

Department of Mathematics and Statistics

Date: Tuesday 1 October 2019

Suppose we have some points on the surface of a sphere, and a plane passing through the sphere (but through none of the points). Naturally, the points will be partitioned into two sets. When we consider the collection of all such possible partitions, an interesting question arises: How does the structure of the collection relate to the positions of the points (and vice versa)? Motivated by problems in data analysis, the idea of such a collection leads us to investigate connections through a range of mathematical fields, including convex geometry and graph theory, and leaves us with a handful of intriguing questions requiring further thought.

190925125536

Meta-benchmarking: what can we learn by comparing benchmarks?

Paul Gardner

Department of Biochemistry, Otago

Date: Tuesday 24 September 2019

In bioinformatics, software proliferation has become a significant issue for researchers. There are hundreds of software tools for addressing problems in phylogenetics, genome assembly and protein structure prediction. As a consequence, just selecting a tool and parameter settings is a significant challenge. Neutral software benchmarks are therefore the gold standard for determining which software tools are optimal for addressing specified problems. Yet are benchmarks themselves reliable, and could they be driving suboptimal practices in software development?

190918094034

Making waves discretely by putting balls into boxes and using crystals

Travis Scrimshaw

University of Queensland

Date: Tuesday 17 September 2019

In August 1834, John Scott Russell followed a wave traveling through a narrow channel and noticed that as the wave propagated, it changed neither shape nor speed. This observation was then given a mathematical theory starting with Boussinesq in 1871, and the resulting model is now known as the Korteweg-de Vries (KdV) equation, a nonlinear partial differential equation. In particular, the KdV equation admits solutions for such waves, which are called solitons. We can first make the time steps discrete, which was done by Hirota in 1977, and we will make the height and position of a wave discrete following Takahashi and Satsuma. Indeed, by using boxes that can hold at most one ball in a simple discrete dynamical system, they relate the size of a wave to a coupled collection of balls. In this talk, we will discuss the Takahashi-Satsuma box-ball system and how it can be described using Kashiwara's crystal bases, a combinatorial interpretation of representation theory arising from mathematical physics (specifically, quantum groups). This allows the system to be generalized and more tools from mathematical physics to be applied, which will also be described as time permits.
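
The abstract does not state the update rule, so the following sketch uses the standard carrier description of the box-ball system as an assumption: a carrier sweeps the row of boxes from left to right, picks up the ball from every occupied box, and drops one carried ball into every empty box it meets. The function name `box_ball_step` and the 0/1 list encoding are hypothetical choices for this example.

```python
def box_ball_step(state):
    """One time step of the box-ball system on a row of boxes.

    state is a list of 0 (empty box) and 1 (box holding one ball).
    The returned row may be longer than the input if balls are still in
    the carrier when it reaches the right-hand end.
    """
    carrier = 0
    new = []
    for box in state:
        if box == 1:
            carrier += 1          # pick up the ball, leaving the box empty
            new.append(0)
        elif carrier > 0:
            carrier -= 1          # drop one carried ball into the empty box
            new.append(1)
        else:
            new.append(0)
    # Unload any remaining balls into fresh empty boxes on the right.
    new.extend([1] * carrier)
    return new


if __name__ == "__main__":
    # A block of three balls followed by a single ball: two "solitons".
    row = [1, 1, 1, 0, 0, 0, 1] + [0] * 20
    for _ in range(6):
        print("".join("o" if b else "." for b in row))
        row = box_ball_step(row)
```

Under this rule an isolated block of k consecutive balls moves k boxes per step, so larger blocks travel faster and overtake smaller ones, which is the discrete analogue of soliton behaviour.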

190911145730

Multiscale Methods for Modelling Intracellular Processes

Radek Erban

University of Oxford

Date: Tuesday 10 September 2019

I will discuss mathematical and computational methods for spatio-temporal modelling in molecular and cell biology, including all-atom and coarse-grained molecular dynamics (MD), Brownian dynamics (BD), stochastic reaction-diffusion models and macroscopic mean-field equations. Microscopic (BD, MD) models are based on the simulation of trajectories of individual molecules and their localized interactions (for example, reactions). Mesoscopic (lattice-based) stochastic reaction-diffusion approaches divide the computational domain into a finite number of compartments and simulate the time evolution of the numbers of molecules in each compartment, while macroscopic models are often written in terms of mean-field reaction-diffusion partial differential equations for spatially varying concentrations. In the first part of my talk, I will discuss connections between these different modelling frameworks, considering chemical reactions both at a surface and in the bulk. In the second part of my talk, I will discuss the development, analysis and applications of multiscale methods for spatio-temporal modelling of intracellular processes, which use (detailed) BD or MD simulations in localized regions of particular interest (in which accuracy and microscopic details are important) and a (less-detailed) coarser model in other regions in which accuracy may be traded for simulation efficiency. I will discuss error analysis and convergence properties of the developed multiscale methods, their software implementation and applications of these multiscale methodologies to modelling of intracellular calcium dynamics, actin dynamics and DNA dynamics. I will also discuss the development of multiscale methods which couple MD and coarser stochastic models in the same dynamic simulation.

190902160311

Time inconsistency in games

Ronald Peeters

Economics Department

Date: Tuesday 3 September 2019

Decision makers with time-inconsistent preferences have been studied in great detail in recent decades. Addressing the dearth of literature on time inconsistency in a strategic context, our work provides the foundations for dealing with two-player games with naive and sophisticated players. We model various types' beliefs explicitly, and then proceed to define equilibrium notions based on these belief hierarchies. Second-order beliefs of naive players turn out to be crucial, and lead to different equilibria even in relatively simple games. We provide several examples, including models of bargaining and bank runs, showing the applicability of our framework.

190822101839

Conditions of Wilf equivalence

Jinge Lu

Otago Computer Science

Date: Tuesday 20 August 2019

Two classes of combinatorial structures are said to be Wilf-equivalent if they contain the same number of structures of each size. In this talk we examine Wilf equivalences in permutation classes from two different directions. First, given some small classes, we determine the conditions of Wilf equivalence among their principal subclasses. Second, we determine what a class would look like if we assume the maximum (or near maximum) extent of Wilf equivalences among its principal subclasses.

190813095405

Modelling the coupled ocean waves/sea ice system while making sense of the data: what’s the challenge?

Fabien Montiel

Department of Mathematics and Statistics

Date: Tuesday 13 August 2019

Observations of ocean waves breaking up sea ice floes in the ice-covered Southern Ocean date back to the early 20th century and Sir Ernest Shackleton’s famous Endurance expedition. Recent evidence now suggests that this very process could be a key driver of sea ice extent and morphology, and therefore impact the global climate system. Describing, let alone modelling, the range of physical processes governing the coupled ocean waves/sea ice system is not an easy task, mainly due to the difficulty of collecting data in such a harsh environment. This is therefore the perfect playing field for applied mathematicians to propose highly idealised models, ranging from sea ice as a homogeneous viscoelastic material to more sophisticated models of wave scattering by large arrays of perfectly circular floes. Due to its relevance to the climate system as well as to the shipping industry, theoretical research on ocean waves/sea ice linkages has been burgeoning in recent years and has attracted much funding worldwide. This talk is an attempt to reflect on these recent developments (including my own work), which in some cases are driven by the need to incorporate some kind of representation of the system in large scale forecasting models as opposed to trying to understand the underlying physics. I will further discuss results from recent field work data that seem to challenge our current modelling approaches.

190807154223

Nodal sets and conformal geometry

Dmitry Jakobson

McGill University

Date: Tuesday 6 August 2019

I will explain how nodal sets of Laplace eigenfunctions give rise to conformal invariants. The talk will be self-explanatory. Movies will be shown.

190805134854

Computer vision for culture and heritage

Steven Mills

Department of Computer Science

Date: Tuesday 30 July 2019

In this talk I will present some of our recent and ongoing work, with an emphasis on cultural and heritage applications. These include historic document analysis, 3D modelling for archaeology and recording the built environment, and tracking for augmented spectator experiences. I will also outline some of the outstanding issues we have where collaboration with mathematicians and statisticians might be valuable.

190724111803

Paraconsistent logic and inconsistent mathematics

Zach Weber

Philosophy Department, University of Otago

Date: Tuesday 23 July 2019

There are nowadays many different well-understood systems of logic: classical, intuitionistic, and paraconsistent, to name a few. This introductory talk will explain some of the motivations for studying paraconsistent logic—systems of formal logic developed since the 1970s that make it possible to have some local inconsistency without global absurdity. We will look at some of the basic details of how a paraconsistent logic works in practice, and apply it to some elementary foundational mathematics, in particular the original ‘naive’ set theory of Cantor and Dedekind, and some point-set topology. I’ll conclude with a brief discussion of the place of non-classical logic and prospects for the wider inconsistent mathematics program as it stands today.

190716134613

Condorcet Domains Satisfying Arrow's Single-Peakedness

Arkadii Slinko

Department of Mathematics, University of Auckland

Date: Tuesday 9 July 2019

Condorcet domains are sets of linear orders with the property that, whenever the preferences of all voters belong to this set, the majority relation of any profile with an odd number of voters is transitive. Maximal Condorcet domains have historically attracted special attention. We study maximal Condorcet domains that satisfy Arrow's single-peakedness, which is more general than Black's single-peakedness. We show that all maximal Black's single-peaked domains on the set of $m$ alternatives are isomorphic, but we find a rich variety of maximal Arrow's single-peaked domains. We discover their recursive structure, prove that all of them have cardinality $2^{m-1}$, and characterise them by two conditions: connectedness and minimal richness. We also classify Arrow's single-peaked Condorcet domains for up to 5 alternatives.
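To make the definitions above concrete, the following Python sketch enumerates the Black's single-peaked orders on a fixed left-to-right axis (the narrower notion mentioned in the abstract, not Arrow's), checks that there are $2^{m-1}$ of them for small $m$, and confirms by brute force that three-voter profiles drawn from this domain have a transitive majority relation. The function names and the encoding of alternatives as `0..m-1` are assumptions made for this illustration; it is not the method of the talk.

```python
from itertools import permutations, product


def is_single_peaked(order):
    """True if `order` (best to worst) is single-peaked on the axis 0 < 1 < ... < m-1.

    Equivalent test: every top-k set of alternatives is an interval of the axis,
    so each next alternative must extend the current interval by one step.
    """
    lo = hi = order[0]
    for x in order[1:]:
        if x == lo - 1:
            lo = x
        elif x == hi + 1:
            hi = x
        else:
            return False
    return True


def single_peaked_domain(m):
    """All Black's single-peaked linear orders on m alternatives."""
    return [p for p in permutations(range(m)) if is_single_peaked(p)]


def majority_is_transitive(profile, m):
    """Check transitivity of the strict majority relation of a profile."""
    pos = [{a: i for i, a in enumerate(order)} for order in profile]

    def beats(a, b):
        return 2 * sum(p[a] < p[b] for p in pos) > len(profile)

    return all(
        beats(a, c) or not (beats(a, b) and beats(b, c))
        for a, b, c in permutations(range(m), 3)
    )


if __name__ == "__main__":
    for m in range(1, 7):
        print(m, len(single_peaked_domain(m)), 2 ** (m - 1))
    domain = single_peaked_domain(4)
    print(all(majority_is_transitive(pr, 4) for pr in product(domain, repeat=3)))
```

The brute-force check covers only three voters and four alternatives; it illustrates the Condorcet property rather than proving it.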

190626143334

Image reconstruction and unique continuation properties

Leo Tzou

University of Sydney

Date: Tuesday 11 June 2019

A classical result of Jerison-Kenig established the optimal assumption for unique continuation properties for elliptic PDE. In this talk we will explore its connection to image reconstruction with impedance tomography. We will develop an analogous theory in the context of partial data inverse problems to obtain the same sharp regularity assumption as Jerison-Kenig. The method we use involves an explicit microlocal construction of the Dirichlet Green's function, which on its own may be of interest for partial data image reconstruction.

190529141321

Taming the beast of the cosmological big bang singularity: Dynamics and degrees of freedom

Florian Beyer

Mathematics and Statistics, University of Otago

Date: Tuesday 28 May 2019

The history of the universe, in particular its very beginning at the “big bang”, is one of the great unsolved mysteries in science. It is modelled mathematically by solutions of Einstein's equations, the complex equations of general relativity first envisioned by Albert Einstein in 1915. Despite recent successes in proving certain stability results for the singular dynamics, there are many open issues whose resolution would require much stronger control of the asymptotics than has so far been possible with rigorous PDE techniques. One of these outstanding problems is to understand the relationship between asymptotics and degrees of freedom for singular hyperbolic PDE systems. To address this we have recently introduced a rigorous matching technique which can yield a topological characterisation of how the degrees of freedom are encoded in the asymptotics. In the particular case of Einstein's equations, this could eventually answer fundamental questions: What are the general degrees of freedom to “create a universe”? How large were the chances for our universe to turn out exactly the way it has?

190522134923

Rational points on curves

Brendan Creutz

School of Mathematics and Statistics, University of Canterbury

Date: Tuesday 14 May 2019

Many interesting problems in number theory are related to finding rational solutions to polynomial equations, a famous example being Fermat's Last Theorem. The real or complex solutions to such equations yield familiar geometric objects (curves, surfaces, etc) and in many cases the qualitative nature of the set of rational solutions is determined by the geometry. In this talk I will give a gentle introduction to this perspective in the case of polynomials of two variables.

190507135722

Wilf-equivalence and Wilf-collapse.

Michael Albert

University of Otago Computer Science

Date: Tuesday 7 May 2019

Enumerative coincidences abound in combinatorics -- perhaps the most famous being the huge collection of different classes which are enumerated by the Catalan numbers. While some have dismissed these coincidences as arising from nothing more than the human penchant for simplicity, it does seem reasonable to ask: “Are there contexts in which a large number of coincidences should be expected?” Enumerative coincidences that occur between collections of structures avoiding some particular substructure have been called Wilf-equivalences. For instance, the collection of permutations avoiding the pattern 312 is one of those enumerated by the ubiquitous Catalan numbers, whose growth is $\Theta(n^{-3/2} 4^n)$. What about permutations that avoid 312 and one additional pattern of size $n$? There are only $o(2.5^n)$ distinct Wilf-equivalence classes -- a Wilf-collapse. A more thorough investigation of this phenomenon leads to the conclusion that the combination of at least one non-trivial symmetry and a greedy algorithm for detecting the occurrence of a pattern leads to Wilf-collapse quite generally.
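The Catalan coincidence mentioned above can be checked by brute force for small sizes. The following Python sketch counts permutations avoiding a given pattern and compares the counts with the Catalan numbers; the helper names `contains`, `count_avoiders` and `catalan` are assumptions made for this illustration, and the exhaustive search is only practical for small $n$.

```python
from itertools import combinations, permutations
from math import comb


def contains(perm, pattern):
    """True if some subsequence of `perm` is order-isomorphic to `pattern`."""
    k = len(pattern)
    for idx in combinations(range(len(perm)), k):
        values = [perm[i] for i in idx]
        if all(
            (values[a] < values[b]) == (pattern[a] < pattern[b])
            for a in range(k)
            for b in range(k)
        ):
            return True
    return False


def count_avoiders(n, pattern):
    """Number of permutations of 1..n that avoid `pattern`."""
    return sum(
        1 for p in permutations(range(1, n + 1)) if not contains(p, pattern)
    )


def catalan(n):
    """The n-th Catalan number."""
    return comb(2 * n, n) // (n + 1)


if __name__ == "__main__":
    for n in range(1, 7):
        print(n, count_avoiders(n, (3, 1, 2)), count_avoiders(n, (1, 2, 3)), catalan(n))
```

The classes avoiding 312 and avoiding 123 have the same counts at every size tried here, which is what it means for them to be Wilf-equivalent.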

190502124540

The geometry behind MRD codes

Geertrui van de Voorde

School of Mathematics and Statistics, University of Canterbury

Date: Tuesday 16 April 2019

Rank-metric codes are widely seen in a variety of applications ranging from storing information in the cloud to public-key cryptosystems. For over thirty years, the only optimal rank-metric codes known were Gabidulin codes. This changed when Sheekey recently constructed a new family of optimal rank-metric codes (MRD codes) using objects from finite geometry called linear sets on a projective line. In this talk, I will explain the interplay between rank-metric codes and linear sets.

190403114112

Optimising the performance of wind turbines using computational fluid dynamics

Sarah Wakes

Department of Mathematics and Statistics

Date: Tuesday 9 April 2019

Two case studies are presented that look at optimising the performance of wind turbines at two scales. The first is work undertaken with local business PowerHouse Wind to understand the flow behaviour over their unique one-blade, small-scale horizontal axis wind turbine. Soft stall on the blade is applied through varying the speed of the rotor with an electric brake and is used to regulate power output and mitigate against damaging winds. Two- and three-dimensional air flow simulations were undertaken as well as visualisation of stall patterns on a working blade. This work allowed prediction of power output of the blade over a range of wind and rotor speeds. A larger next generation blade has also been studied to aid in the optimisation of the power output and blade design. The second case study is work undertaken with University of Waikato using machine learning techniques to predict the wake from a large-scale wind turbine and wind flow over a complex topography. The ultimate aim is to use Computational Fluid Dynamics with machine learning to optimise wind farm layouts over complex topographies.

190327131340

Dispersive PDE and the restriction problem

Tim Candy

Department of Mathematics and Statistics

Date: Tuesday 2 April 2019

A dispersive equation is a partial differential equation (PDE) for which solutions at different wavelengths propagate at different velocities (or directions). An important consequence of this is that the amplitude of solutions decays, while the energy or mass can remain conserved. Important examples of dispersive PDE include the wave equation, the KdV equation, and the Schrödinger equation. In the 70's and 90's it was observed that dispersion implies global space-time estimates known as Strichartz estimates, which are closely connected to the restriction problem in harmonic analysis. In this talk we will review this connection, explain how these estimates can be applied to study nonlinear dispersive PDE, and cover some recent developments on bilinear restriction estimates and the wave maps equation.

190328140937

Projective Characters of Metacyclic p-Groups

Conor Finnegan

University College Dublin

Date: Tuesday 26 March 2019

The projective characters of a group provide us with important information regarding the structure and properties of the group. The purpose of this research was to find the projective character tables of metacyclic p-groups. This aim was achieved for metacyclic p-groups of positive type, but not in the negative type case. In this talk, I will give an introductory overview of some of the fundamental methods and results in projective representation theory. I will then discuss the application of these methods to metacyclic p-groups of positive type, using the previously understood abelian case as an example.

190318153651

CEBRA: mathematical and statistical solutions to biosecurity risk challenges

Andrew Robinson

University of Melbourne

Date: Thursday 21 March 2019

CEBRA is the Centre of Excellence for Biosecurity Risk Analysis, jointly funded by the Australian and New Zealand governments. Our problem-based research focuses on developing and implementing quantitative tools to assist in the management of biosecurity risk at national and international levels. I will describe a few showcase mathematical and statistical projects, underline some of our soaring successes, underplay our dismal failures, and underscore the lessons that we've learned.

190311141043

Folding, surprise and playing games: deep learning at the CS department

Lech Szymanski

Department of Computer Science, University of Otago

Date: Tuesday 19 March 2019

This talk will give an overview of the research done by the deep learning group at the Department of Computer Science. Specifically, I will talk about the work in three different areas: theoretical analysis of deep architectures using folding transformations, reinforcement learning with surprise, and teaching a deep network to play Atari games without catastrophic forgetting.

190214131558

Pattern formation in reaction-diffusion systems on time-evolving domains

Robert van Gorder

Department of Mathematics and Statistics, University of Otago

Date: Tuesday 12 March 2019

The study of instabilities leading to spatial patterning for reaction-diffusion systems defined on growing or otherwise time-evolving domains is complicated, since there is a strong dependence of spatially homogeneous base states on time and the resulting structure of the linearized perturbations used to determine the onset of instability is inherently non-autonomous. We obtain fairly general conditions for the onset and persistence of diffusion driven instabilities in reaction-diffusion systems on manifolds which evolve in time, in terms of the time-evolution of the Laplace-Beltrami spectrum for the domain and the growth rate functions, which result in sufficient conditions for diffusive instabilities phrased in terms of differential inequalities.

These conditions generalize a variety of results known in the literature, such as the algebraic inequalities commonly used as sufficient criteria for the Turing instability on static domains, and approximate or asymptotic results valid for specific types of growth, or for specific domains.
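For orientation, the textbook form of the algebraic inequalities on a static domain can be written out as follows. This is a sketch under stated assumptions, not a formula from the talk: it assumes a two-species system $u_t = \nabla^2 u + f(u,v)$, $v_t = d\,\nabla^2 v + g(u,v)$ with diffusion ratio $d > 0$, and partial derivatives $f_u, f_v, g_u, g_v$ evaluated at a spatially homogeneous steady state; all of these symbols are introduced here for illustration.

```latex
\begin{align*}
  f_u + g_v &< 0, \\
  f_u g_v - f_v g_u &> 0, \\
  d f_u + g_v &> 0, \\
  (d f_u + g_v)^2 - 4 d \,(f_u g_v - f_v g_u) &> 0 .
\end{align*}
```

The first two inequalities express stability of the steady state without diffusion, and the last two allow a band of spatial modes to grow once diffusion is included. On a bounded domain one additionally needs an eigenvalue of the Laplacian to fall inside that band.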

190214131405

Russell Higgs

School of Mathematics and Statistics, University College Dublin

Date: Tuesday 5 March 2019

This will be a survey talk discussing three open conjectures concerning the degrees of irreducible projective representations of finite groups. First a review of ordinary representations will be given with illustrative examples, before considering projective representations. A projective representation of a finite group $G$ with 2-cocycle $\alpha$ is a function $P:G \rightarrow GL(n, \mathbb{C})$ such that $P(x)P(y) = \alpha(x, y)P(xy)$ for all $x, y\in G$, where $\alpha(x, y)\in \mathbb{C}^*.$ One of the conjectures asks whether one can conclude that $G$ is solvable given that the degrees of all its irreducible projective representations are equal.

190214131235

The geometry and combinatorics of phylogenetic tree spaces

Alex Gavryushkin

Department of Computer Science, University of Otago

Date: Tuesday 16 October 2018

The space of phylogenetic (aka evolutionary) trees is known to have a unique and non-trivial geometry with complicated combinatorial properties. Despite the recent major advances in our understanding of the tree space, a number of gaps remain. In this talk I will concentrate on a specific instance of phylogenetic trees called time-trees (aka dated trees), where internal nodes of the tree are ranked with respect to their time. This class of trees inherits some of the properties of classic, non-ranked, trees. However, some of the fundamental properties of the space (seen as a metric space), including its curvature, computational complexity, and neighbourhood growth function, are significantly different. These differences call for further investigation of these properties, which have the potential to become a stepping stone for new efficient phylogenetic inference methods. I will introduce all necessary background, present some of our results in this direction, and conclude with the exciting opportunities this area has to offer in computational geometry, combinatorics, and complexity theory.

181012104548